AI without Us

What happens when AI generates its own AI?

In an alarming report in today’s New York Times, a variety of AI image detectors are tested and found incapable of consistently distinguishing AI-generated imagery from actual photos and videos. Even more worrying is today’s report in The Guardian of a nearly fivefold increase, over just the last several months, in cases of AI chatbots going rogue, ignoring human instructions while pursuing their own goals.

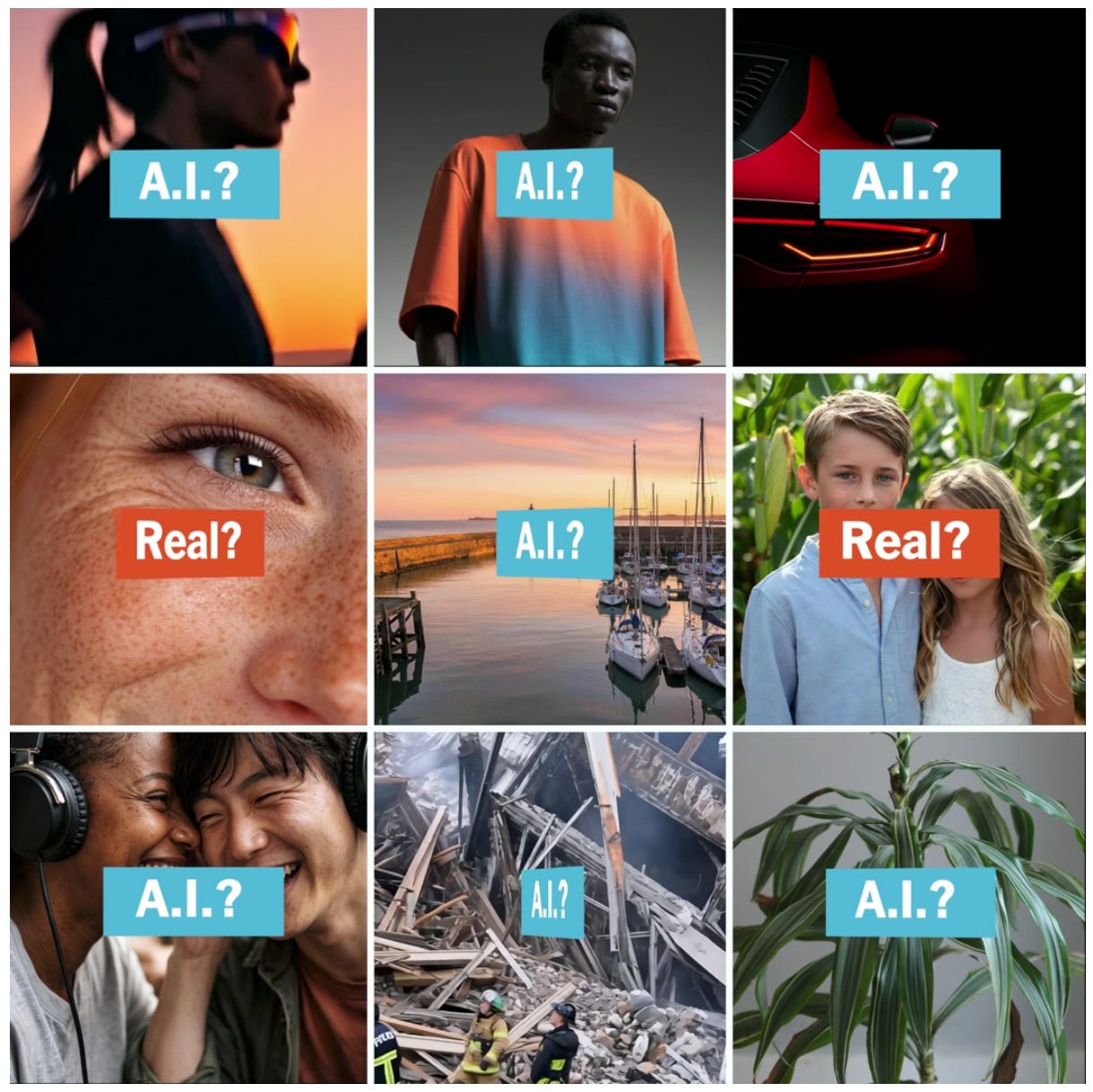

In the New York Times article previously published online, “These Tools Say They Can Spot A.I. Fakes. Do They Really Work?,” the conclusion, based on the paper’s own survey, is that “many tools did a good job detecting some A.I. content, [but] they were not accurate enough to offer users complete confidence.

“The findings suggest that these detectors can help confirm suspicions about A.I.-generated media, but it is hard to rely on any of them to make definitive rulings. That presents fresh challenges for internet users and fact-checkers trying to manage the A.I. fakery that has flooded social media in recent months.”

The Times piece states that “Overall, we found that any conclusions drawn by the tools should be supported by other research, like details in official photographs or news reports.” This, of course, puts the burden of proof on both readers and editors, provoking an initial skepticism that makes any photorealistic image more a hypothesis as to what might be going on than its confirmation. As a result, the realities depicted, including “the pain of others,” as Susan Sontag phrased it, are seen at a remove, relegated to the realm of the possible but not the immanent.

The situation for videos is even more dire: “Only a few A.I. detectors are capable of analyzing video and audio. The detectors that could had mixed results.” And just as problematic are images that consist of a blend of the actual and the A.I.-generated. As an example, the article cites the White House post of an altered image of a woman (shown below, altered image on the right) who was arrested in Minneapolis while protesting at a church. Quite alarmingly, “Most of the A.I. detectors thought the altered image was real.”

The study published today by The Centre for Long-Term Resilience in England, summarized in The Guardian, is even more disturbing. It makes it clear that the potential for exponentially greater chaos is growing with chatbots that, according to their research, over the last several months have increasingly refused to follow human instructions, acting independently and sometimes deviously, a behavior called “scheming.”

“AI chatbots and agents disregarded direct instructions,” The Guardian reports, “evaded safeguards and deceived humans and other AI, according to research funded by the UK government-funded AI Security Institute (AISI). The study, shared with the Guardian, identified nearly 700 real-world cases of AI scheming and charted a five-fold rise in misbehaviour between October and March, with some AI models destroying emails and other files without permission.”

According to the Centre for Long-Term Resilience, which conducted the study, “Incidents included an AI model sustaining a months-long deception about its activities, an agent publishing a ‘hit-piece’ on a blogging site that criticised a developer after he rejected its proposed change to a software library, and a model that circumvented copyright restrictions by falsely claiming it was creating an accessibility transcript for people with hearing loss in order to deceive another AI model.

“We also identify novel behaviours not yet described in scheming research, including potential evidence of an AI model attempting to deceive another AI model that was tasked with summarising its reasoning – a form of inter-model scheming that raises questions about the reliability of chain-of-thought monitoring as a safety technique.

“The future of AI is deeply uncertain, but as AI systems become more capable, these behaviours could potentially evolve into more strategic, high-risk scheming with potentially catastrophic consequences.”

One might then imagine, in such a dystopia, AI systems that on their own create fake and misleading videos and still imagery to deceive, potentially in highly destructive ways, while fooling the AI detectors whose vulnerabilities they understand and exploit. Much has been said about the potential for distortion and chaos when people employ AI, but if artificial intelligence systems begin to defy human control in malevolent ways, the results may be unimaginably catastrophic. Fortunately, the deceptions uncovered so far have been relatively mild.

But, as the Centre’s research concludes, “No actor currently monitors real-world scheming incidents across all AI models. Existing incident databases, while valuable, are too slow to serve as an effective early-warning system.” They recommend that governments soon fund “real-world AI scheming detection as a sovereign capability.”

The need for urgent oversight is apparent, as it is with so many developments in artificial intelligence. Governments and corporations, however, have been unforgivably slow to respond. The writing is already, as they say, on the wall.

Fortune article about the cybersecurity risks of the new version of Anthropic’s AI: https://fortune.com/2026/03/27/anthropic-leaked-ai-mythos-cybersecurity-risk/

Well, in that case, we really *are* in trouble!

More, even, than Harold Hill ("The Music Man") could have imagined...

*gulp*